This post summarizes the layer-fMRI abstracts from major relevant conferences in 2026, including ISMRM. It follows the posts of previous years: 2025, 2024, 2023, 2022, 2021, 2020, 2019. Edit suggestions are welcome (renzohuber@gmail.com).

Continue reading “layer-fMRI abstracts 2026”

Author: renzohuber

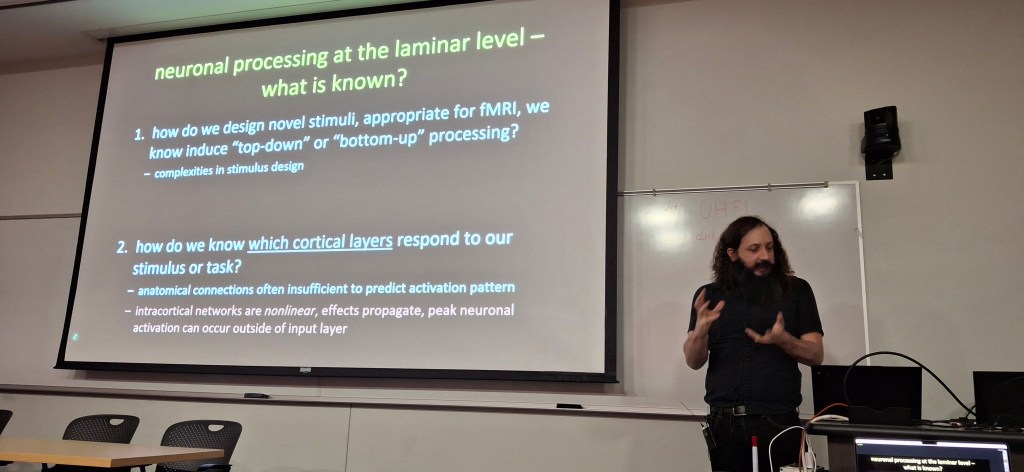

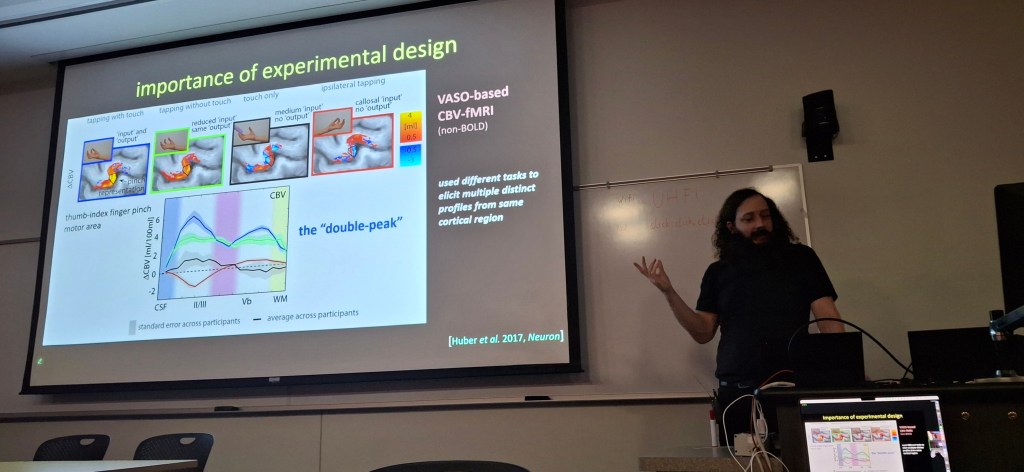

Video recordings of Laminar fMRI Course at Martinos Center 2023

October 2-5 2023 @ Martinos Center in Boston, MA

The combination of ultra-high field (7 Tesla and above) imaging with increasingly sophisticated data analysis tools has led to a surge of research using functional MRI acquisitions to examine the behavior of individual cortical layers of the brain. This course will focus on teaching the acquisition and analysis tools needed to contribute to this research.

Content: four days of hands-on training on laminar fMRI

day 1: introduction to laminar fMRI & basic data acquisition

day 2: data preprocessing and analysis

day 3: interpretation and modeling

day 4: advanced applications and future directions

layer-fMRI abstracts 2025

Seventh layer-fMRI dinner: Translational Applications of layer-fMRI.

On August 22nd 2024, we will host the 7th virtual layer-fMRI dinner. As usual, it’s free, just follow the link below.

The 7th layer-fMRI dinner will focus on Translational Applications of layer-fMRI.

For pages of previous dinners see here: https://layerfmri.com/dinners/

Continue reading “Seventh layer-fMRI dinner: Translational Applications of layer-fMRI.”

OHBM 2024 Brainhack: Hack your RF coil

This hackathon project is part of the series Hack your Scanner, following the contributions of previous years: 2022 (VASO mosaic), 2021 (visually exporting scanner data with a QR modem), and 2020 (viewing data as ASCII art on MARS with LN_INFO). This year is about hacking your RF coil.

Continue reading “OHBM 2024 Brainhack: Hack your RF coil”

layer-fMRI abstracts 2024

3rd order shim for layer-fMRI: To get best data quality, avoid certain EPI frequencies.

On Oct 13th 2023, Nicolas Boulant presented an intriguing source of MRI image artifacts at the CMRR high field meeting in Minnesota. He suggested that the 3rd-order shim can result in amplified gradient trajectory imperfections. In low bandwidth FLASH, this can manifest as faint ghosts in the read direction shifted by a few pixels. In EPI, on the other hand, these trajectory errors can result in fuzzy ripples (low spatial frequency ghosts and shadings, not edge ghosts).

In a recent meta-analysis of all openly available layer-fMRI datasets, I found that fuzzy ripples are one of the main limits of high-quality layer-fMRI acquisition (see here) across vendors. So, I was curious whether the 3rd-order shim might be partly related to this. In this blog post, I describe my attempts to reproduce Nicolas’s results and investigate the effect of the 3rd-order shim on layer-fMRI protocols. I find that disconnecting the 3rd-order shim can result in significantly better data quality. However, this improvement is only visible for specific echo spacings, which are either in the ‘forbidden frequencies’ or which have side bands in the forbidden frequencies.

This post does not imply that previous research was conducted sub-optimally. Since it is common practice to optimize the EPI echo spacing in the piloting stage of each study, the frequencies with these artifacts are usually avoided anyway. Here, we confirm that this is good practice.
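As a rough illustration of the echo-spacing check discussed above, the sketch below converts an EPI echo spacing into the fundamental frequency of the read gradient (one gradient period spans two echoes, so f = 1 / (2 × echo spacing)) and tests it, together with its third harmonic, against a list of frequency bands. The band edges, the function names, and the choice of harmonics are placeholders of mine for illustration; the real forbidden bands are hardware-specific and need to be looked up for the scanner at hand.

```python
def epi_readout_frequency_hz(echo_spacing_ms):
    """Fundamental frequency of the EPI read gradient.

    One full gradient period covers two echoes (a positive and a
    negative lobe), so f = 1 / (2 * echo spacing).
    """
    return 1000.0 / (2.0 * echo_spacing_ms)


def hits_forbidden_band(echo_spacing_ms, bands_hz, harmonics=(1, 3)):
    """Return (harmonic, frequency) pairs that fall inside any band."""
    f0 = epi_readout_frequency_hz(echo_spacing_ms)
    hits = []
    for n in harmonics:
        f = n * f0
        if any(low <= f <= high for low, high in bands_hz):
            hits.append((n, f))
    return hits


# Placeholder bands in Hz. These are NOT taken from any real scanner.
HYPOTHETICAL_BANDS_HZ = [(550.0, 650.0), (1000.0, 1200.0)]

for es_ms in (0.45, 0.80, 1.00):
    f0 = epi_readout_frequency_hz(es_ms)
    hits = hits_forbidden_band(es_ms, HYPOTHETICAL_BANDS_HZ)
    status = "avoid" if hits else "ok"
    print(f"echo spacing {es_ms:.2f} ms -> {f0:.0f} Hz: {status}")
```

Running such a check over the candidate echo spacings during piloting is one way to make the usual trial-and-error step explicit.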

Continue reading “3rd order shim for layer-fMRI: To get best data quality, avoid certain EPI frequencies.”

Sixth layer-fMRI dinner: layer-fMRI and the academia-industry relationship

On February 8th 2024, we will host the 6th virtual layer-fMRI dinner. As usual, it’s free, just follow the link below.

The 6th layer-fMRI dinner will focus on the relationship between layer-fMRI academia and industry. Layer-fMRI basic research and industry have different goals, yet they help and facilitate one another. Without each other, each of our lives would be harder.

Continue reading “Sixth layer-fMRI dinner: layer-fMRI and the academia-industry relationship”

Second CMRR cdr workshop: Layer-fMRI analysis across pipelines

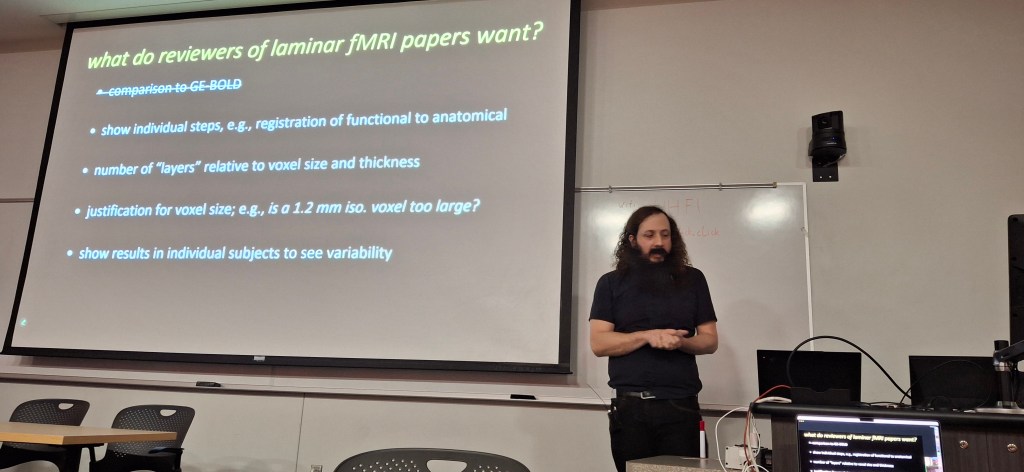

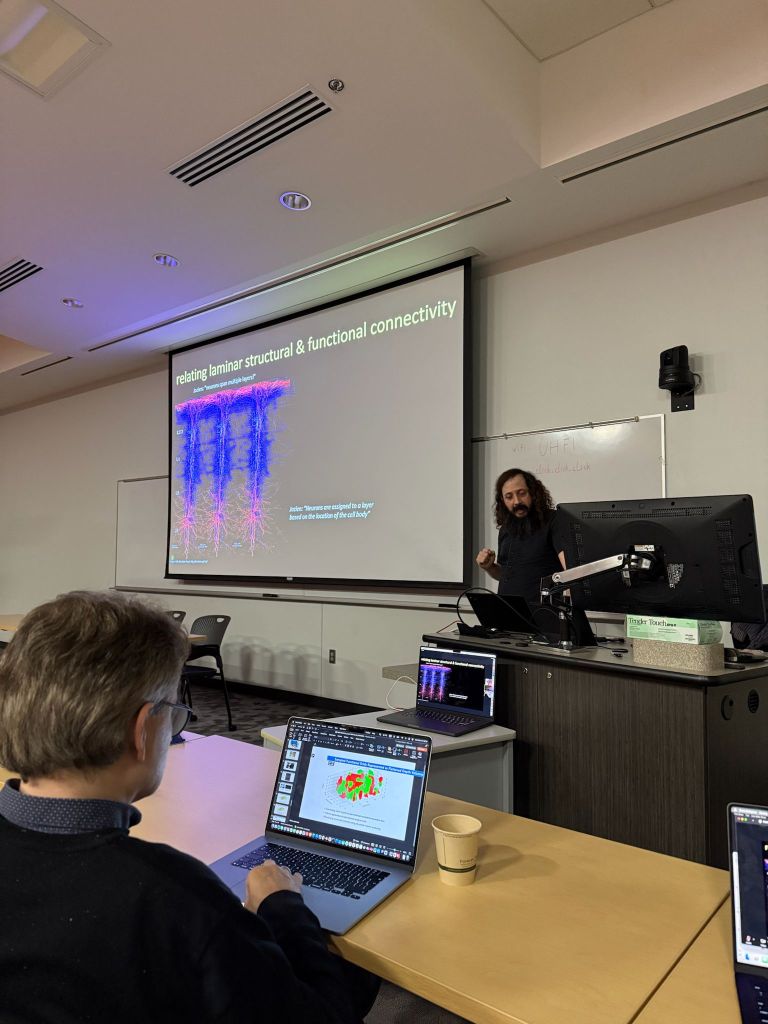

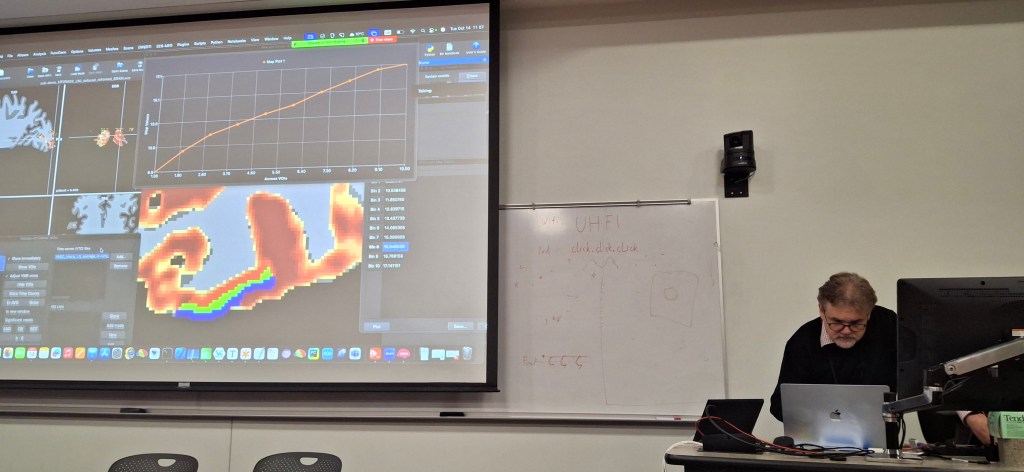

This post summarizes the workshop on cortical depth-resolved (cdr) fMRI, aka layer-fMRI, held Oct 10th-11th 2023. The official website is: https://sites.google.com/umn.edu/2023-minnesota-workshop/training/depth-resolved-layer-fmri. The slides, the data, and the analysis scripts can be found here: https://doi.org/10.5281/zenodo.10004904.

The goal of this hands-on workshop was to:

- Talk about the stuff that is not in the paper!

- Show you the “ugly” parts of layer fMRI

- Provide enough knowledge to allow analyzing layer data from beginning to end

- Highlight the cool features of some of the most commonly used software

Best misheard sentence: “With Layer-fMRI, we have access to the cortical circus” (referring to circuit).

Newly established word invention: “These data have been NORDICed”

The workshop featured 4 independent pipelines of layer-fMRI analysis:

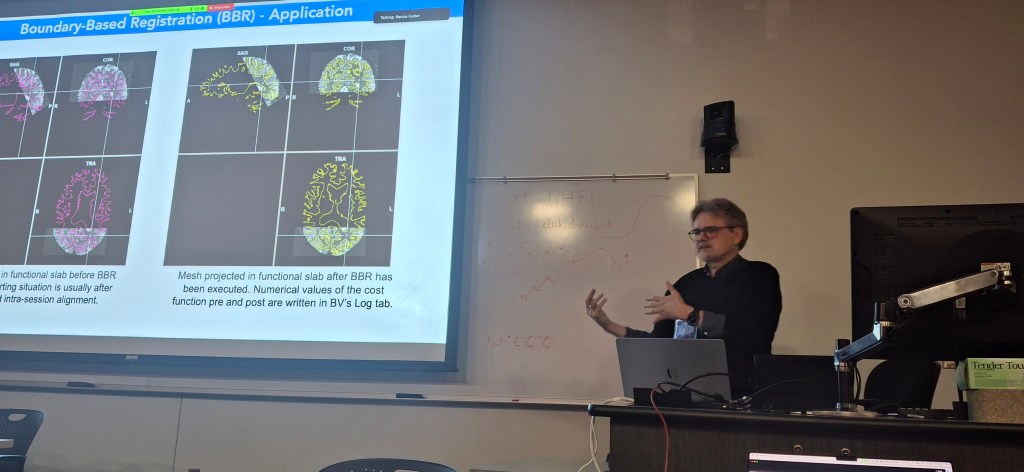

- Yulia Lazarova: BrainVoyager

- Anna Blazejewska: Freesurfer

- Renzo Huber: LayNii & AFNI

- Cheryl Olman: Distortion correction and registration

The data used here can be found on Zenodo: https://doi.org/10.5281/zenodo.10004904

Luca Vizioli: Introduction to the

Anna I Błażejewska: FreeSurfer processing pipeline for laminar fMRI

Layer-fMRI analysis in LayNii and AFNI: Renzo Huber

Cheryl Olman: Nothing fancy: working with layer data with AFNI and FreeSurfer out-of-the-box

Layer-fMRI abstracts 2023

This page collects layer-fMRI abstracts from neuroimaging conferences in 2023. This is following the tradition of layer-fMRI abstracts in the previous years: layer-fMRI abstracts 2019, layer-fMRI abstracts 2020, layer-fMRI abstracts 2021, and layer-fMRI abstracts 2022. Comments and completions are welcome (renzohuber@gmail.com).

Continue reading “Layer-fMRI abstracts 2023”

The relationship of layer-fMRI with other fields: a graphical story in cynical metaphors

This is the second blog post about graphic representations of cynical metaphors. The first post on graphical metaphors was about finding the best layer-fMRI sequence and can be found here. This one is about how layer-fMRI fits into the landscape of other disciplines.

Continue reading “The relationship of layer-fMRI with other fields: a graphical story in cynical metaphors”

Fifth layer fMRI dinner: What can we learn about cortical layers using laminar fMRI?

On Dec 7th 2022, we will host the 5th virtual layer-fMRI dinner.

The board of the layer-fMRI dinner group has invited the following speakers to initiate discussions on the theme: Layer-fMRI signal origin: From neurons to vessels to BOLD.

- Serge Dumoulin (NINS; Utrecht University): How population receptive field properties change across cortical laminae: from vision to cognition.

- Anna Devor (Boston University): Layer-resolved imaging of resting CMRO2 in awake mice with phosphorescent O2 probes.

- Lars Muckli (University of Glasgow): From man to non-human primates and mouse: Two top-down streams in different layers of retinotopic visual cortex (the ground truth).

Moderated by Luca Vizioli and Tyler Morgan

The entire event will last for about 90 min (including discussion).

The meeting will be recorded and published on Youtube and embedded on this website by Dec 8, 2022.

Everyone is welcome. No registration required. Zoom link:

https://layerfmri.page.link/Zoom

| Los Angeles | Chicago | New York | UK | Europe | Beijing | Sydney |
| --- | --- | --- | --- | --- | --- | --- |
| 6:00 | 8:00 | 9:00 | 14:00 | 15:00 | 22:00 | 23:00 |

Introduction

Serge Dumoulin

How population receptive field properties change across cortical laminae: from vision to cognition.

A key advantage brought by ultra-high field MRI at 7 Tesla and more is the possibility to increase the spatial resolution at which data is acquired, with little reduction in image quality. This opens a new set of opportunities for cognitive neuroscience, for example to probe how signals vary across cortical thickness and laminae. Here, I present recent work on computational modelling of population receptive field properties. I will discuss how they vary across cortical laminae, how these properties are influenced by attention and extend these protocols from primary visual cortex towards numerical cognition in association cortex. I will also discuss some of the limitations and the potential of laminar imaging in human cortex.

Anna Devor

Layer-resolved imaging of resting CMRO2 in awake mice with phosphorescent O2 probes.

The cerebral cortex is organized in cortical layers that differ in their cellular density, composition, and wiring. Cortical laminar architecture is also readily revealed by staining for cytochrome oxidase – the last enzyme in the respiratory electron transport chain located in the inner mitochondrial membrane. It has been hypothesized that a high-density band of cytochrome oxidase in cortical layer IV reflects higher oxygen consumption under baseline (unstimulated) conditions. We tested the above hypothesis using direct measurements of the partial pressure of O2 (pO2) in cortical tissue by means of 2-photon phosphorescence lifetime microscopy (2PLM). We revisited our previously developed method for extraction of the cerebral metabolic rate of O2 (CMRO2) based on 2-photon pO2 measurements around diving arterioles and applied this method to estimate baseline CMRO2 in awake mice across cortical layers. Our results revealed a decrease in baseline CMRO2 from layer I to layer IV. This decrease of CMRO2 with cortical depth was paralleled by an increase in tissue oxygenation. Higher baseline oxygenation and cytochrome density in layer IV may serve as an O2 reserve during surges of neuronal activity or certain metabolically active brain states rather than baseline energy needs.

Lars Muckli

From man to non-human primates and mouse: Two top-down streams in different layers of retinotopic visual cortex (the ground truth).

Using laminar fMRI, we identified two top-down processing streams. (1) One stream is for contextualizing visual input based on fast recurrent processing of contextual information and (2) a second top-down projection is used for visual imagery. Both top-down streams are based on non-direct geniculate input to visual cortex. To investigate the ‘ground-truth’ of this top-down processing, collaborators in the Human Brain Project (HBP) conducted parallel experiments in monkeys and mice using microelectrode recording and two-photon calcium imaging. The contextual feedback effects in complex visual fields are fast, dependent on learning and are likely communicated by disinhibition in superficial layers of cortex.

Complex cognitive tasks are difficult to instruct in non-human primates and in rodents, but in humans we can see that top-down feedback processing is used for visual imagery, and also object comparison and navigation.

Discussion

OHBM 2022 Hackathon project: MOSAIC for VASO fMRI

Description

Vascular Space Occupancy is an fMRI method that is popular for high-resolution layer-fMRI. Currently, the most popular sequence is the one by Rüdiger Stirnberg from the DZNE in Bonn, which is actively being employed at more than 30 sites.

This sequence concomitantly acquires fMRI BOLD and blood volume signals. In the SIEMENS reconstruction pipeline, these signals are mixed together within the same time series, which challenges its user friendliness. Specifically:

- The “raw” dicom2nii-converted time-series are not BIDS compatible (see https://github.com/bids-standard/bids-specification/issues/1001).

- The order of odd and even BOLD and VASO image TRs is dependent on the nii-converter.

- Workarounds with 3D distortion correction result in interpolation artifacts.

- Workarounds without MOSAIC decorators result in impractically large data sizes.
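To make the odd/even issue above concrete, here is a minimal sketch of how an interleaved time series is typically separated offline into its two contrasts. It assumes a 4D array with time as the last axis; the function name and the `first_is_vaso` flag are mine and purely illustrative. Because the order depends on the converter, the flag has to be checked for each dataset rather than assumed.

```python
import numpy as np


def split_interleaved(timeseries, first_is_vaso=True):
    """Split a 4D (x, y, z, t) array whose volumes alternate between
    two contrasts into (vaso, bold) sub-series of equal length."""
    even = timeseries[..., 0::2]
    odd = timeseries[..., 1::2]
    # Drop an unpaired trailing volume so both series have equal length.
    n = min(even.shape[-1], odd.shape[-1])
    even, odd = even[..., :n], odd[..., :n]
    return (even, odd) if first_is_vaso else (odd, even)


# Toy data: 7 volumes of 2x2x2 voxels, intensity equals the volume index.
data = np.arange(7, dtype=float).reshape(1, 1, 1, 7) * np.ones((2, 2, 2, 1))

vaso, bold = split_interleaved(data, first_is_vaso=True)
print(vaso[0, 0, 0])  # volumes 0, 2, 4
print(bold[0, 0, 0])  # volumes 1, 3, 5
```

Splitting after conversion works, but it is exactly the kind of per-site workaround that sorting the two contrasts at reconstruction time would make unnecessary.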

The goal of this Hackathon is to extend the 3D-MOSAIC functor to solve these constraints. This functor is commonly used in the SIEMENS reconstruction pipeline to sort images by echo times, by RF channels, and by magnitude and phase into sets of mosaics. Currently, however, this functor does not yet support the dimensionality of SETs. In this project we seek to include SETs into the capabilities of the functor.

Continue reading “OHBM 2022 Hackathon project: MOSAIC for VASO fMRI”

Layer-fMRI abstracts 2022

This page collects layer-fMRI abstracts from neuroimaging conferences in 2022. This is following the tradition of layer-fMRI abstracts in the previous years: layer-fMRI abstracts 2019, layer-fMRI abstracts 2020, and layer-fMRI abstracts 2021. Comments and completions are welcome (renzohuber@gmail.com).

Continue reading “Layer-fMRI abstracts 2022”

Fourth layer fMRI dinner: Neurons → vessels → fMRI

On Oct 6th 2021, we aim to host the 4th virtual layer-fMRI dinner.

The board of the layer-fMRI dinner group has invited the following speakers to initiate discussions on the theme: Layer-fMRI signal origin: From neurons to vessels to BOLD.

- Amir Shmuel (McGill): The complexity of lamina resolved neuronal activity, and the spatial specificity of BOLD, CBV, arterioles and venules responses: implications for planning and interpreting depth-dependent fMRI.

- Jonathan Polimeni (MGH): Biophysical modeling for interpreting fMRI signals and relating them back to neuronal activity: contemplating the “inverse problem”.

- Evelyn Lake (Yale): Leveraging simultaneous multi-modal fMRI and wide-field optical imaging to study functional brain networks.

Moderated by Luca Vizioli and Andrew Morgan

Board: Johanna Bergmann, Avery Berman, Saskia Bollmann, Denis Chaimow, Renzo Huber, Nils Nothnagel, René Scheeringa, and Bianca van Kemenade.

The entire event will last for about 90 min (including discussion).

The meeting will be recorded and published on Youtube and embedded on this website by October 7th 2021.

Everyone is welcome. No registration required. Zoom link: https://laminauts.page.link/meeting_channel

| Brisbane | Korea | Germany | UK/UTC | New York | Minnesota | San Francisco |
| --- | --- | --- | --- | --- | --- | --- |
| Oct 6th | Oct 6th | Oct 6th | Oct 6th | Oct 6th | Oct 6th | Oct 6th |
| 11pm | 10pm | 3pm | 2pm | 9am | 8am | 6am |

Introduction

Jonathan Polimeni (MGH)

Biophysical modeling for interpreting fMRI signals and relating them back to neuronal activity: contemplating the “inverse problem”.

Abstract:

The ultimate limits of spatial and temporal resolution achievable by fMRI are dictated by neurovascular coupling, the mechanisms of blood flow regulation, and vascular architecture in the brain. While these limits are currently unknown, there is a rapidly growing body of evidence pointing to the ability of fMRI to distinguish sites of activation across cerebral cortical depths, which can be used to infer the cortical layer or layers differentially engaged in specific tasks or functional networks. Because all fMRI signals currently in use are based on hemodynamics and hence are influenced by local vasculature, understanding how patterns of neural activity are transformed into the fMRI signals we measure can potentially not only aid in the interpretation of our data but also open possibilities to better estimate the location (in space and time) and amplitude of the neural response from the fMRI response—to the extent that this transformation is “invertible”.

Motivated by this, the goal of this presentation is to survey recent work towards building biophysical models of the fMRI signals to help with this interpretation, with a focus on models using realistic microvascular networks and dynamics based on optical imaging and microscopy data. These models are built on first principles and are described by meaningful anatomical and physiological parameters. I will present initial results demonstrating how these models can be used to predict well-known differences in the hemodynamic response across stimulus configurations and cortical depths. While these models are complex, and simulations are computationally intensive, they can also be used to help inform simpler “lumped” models that are more practical for routine use, and are applicable to predicting various BOLD and non-BOLD fMRI contrasts.

Another goal of this presentation is to engage the laminar fMRI community and have an open discussion about the strengths and weaknesses of this modeling approach, consider these against other approaches to improve neural specificity in fMRI, and discuss how to combine this framework with advanced acquisitions and analysis methods towards our shared objective to measure neural activity across cortical layers with fMRI.

The complexity of lamina resolved neuronal activity, and the spatial specificity of BOLD, CBV, arterioles and venules responses: implications for planning and interpreting depth-dependent fMRI

Leveraging simultaneous multi-modal fMRI and wide-field optical imaging to study functional brain networks.

Discussion

Layer-fMRI Analysis Project 2025

Conducted on October 13-14th 2025

Coordinators: Jonathan Polimeni, Renzo Huber, and Luca Vizioli

Training Faculty: Laurentius Huber, Rainer Goebel, Anna Izabella Blazejewska, Luca Vizioli, and Jonathan Polimeni

One dataset, many analyses: an overview of the diverse processing approaches in layer-fMRI.

The layer-dinner group would like to invite you to show us your analysis pipeline in a brief presentation at an upcoming “Layer-fMRI dinner” in the Spring of 2022. The analysis of layer-fMRI data is challenging and not straightforwardly doable with standardized, streamlined analysis packages. Most layer-fMRI groups have their own dedicated analysis solutions to account for layer-specific challenges. As such, the purpose of this event is:

- To illustrate multiple layer analyses of members of the field, and for others to follow.

- To highlight challenges of high-res and layer specific analysis.

- To stimulate discussion about analysis challenges and solutions.

- To give analysis developers a platform to advertise their analysis solutions.

- To illustrate differences and similarities of pipelines.

Introduction by Jonathan Polimeni

Rainer Goebel: Brain Voyager

Lecture

Hands on

Renzo Huber: LayNii & AFNI

Lecture

Hands on:

Anna Blazejewska: Freesurfer

Lecture

Hands-on instructions

For the BrainVoyager hands-on session by Rainer Goebel:

- Please download the free educational version of BrainVoyager here (links will work shortly): For macOS (Apple Silicon ‘M’ chips): http://download.brainvoyager.com/temp/layer-fmri-course-cmrr/BrainVoyager_EDU_24.2-b2_arm64_Installer.pkg

- Windows users (or Mac users who do not want to update to the new ‘b2’ version) should download and unzip the following file: https://download.brainvoyager.com/temp/layer-fmri-course-cmrr/Layer-fMRI-Course-CMRR-2025-data-reg-update.bin.zip

- All users should also download the following zip files with intermediate data files if possible:

- https://download.brainvoyager.com/temp/layer-fmri-course-cmrr/Prepared-Anatomical-Files.zip

- https://download.brainvoyager.com/temp/layer-fmri-course-cmrr/sub-demo_Avg-4runs-03-to-06_bold_AP_undist.zip

- https://download.brainvoyager.com/temp/layer-fmri-course-cmrr/Coreg-files.zip

- https://download.brainvoyager.com/temp/layer-fmri-course-cmrr/sub-demo_MP2RAGE_UNI_defaced_reframed_tissue-probs-slow.vmp.zip

For the LayNii-AFNI hands-on session by Renzo Huber, please download and install the following programs:

- LayNii: https://github.com/layerfMRI/LAYNII and https://github.com/layerfMRI/LAYNII/tree/master/ida

- AFNI binaries: https://afni.nimh.nih.gov/pub/dist/doc/htmldoc/background_install/download_links.html

- ITK-snap: https://www.itksnap.org/

For the FreeSurfer hands-on session by Anna Blazejewska, no prior preparations are needed. You will get server access. You can access the server with a VNC viewer.

- Mac users can use the native VNC client.

- Windows users will need to install a client such as TightVNC Viewer (https://www.tightvnc.com).

- Instructions on how to use it are here.

The data that we will work with are below:

- Main dataset https://doi.org/10.7910/DVN/UPJZZS

- Zenodo mirror: https://doi.org/10.5281/zenodo.17298902

- Mirror as Dropbox: https://www.dropbox.com/scl/fo/xx72q9om8tx2lg785j9ux/ADBT4UmsvyEd2_UKCJhCeag?rlkey=n7i91qrasicp4m6npub22xt3y&st=s51cf212&dl=1

- Mirror as Gdrive (not recommended as it might run into download quota): https://drive.google.com/drive/folders/18joR_Kvil9OK13lZNMmbfOj_WYnETxqU?usp=sharing

Updates will follow

Third layer-fMRI dinner: Cognitive Models and Cortical Layers.

On April 20th 2021, the third virtual layer-fMRI dinner took place. 120 (unique) attendees joined and discussed the connection between layer-fMRI and cognitive models.

This meeting is held as a succession of the first two virtual dinners in May 2020 and Sept 2020:

In this third event, it will be discussed how the layer-fMRI methodologies might be able to inform Cognitive models. The three speakers are researchers that are working to examine cognitive processes whose study is aided by understanding the structure and function of cortical layers. These cognitive processes could include memory, attention, learning, dreaming, language or cortical predictions (plus many, many more!)

Floris de Lange will give an overview of work done by his group to capture laminar fMRI activity changes in the visual cortex for prediction, attention and bottom-up input. André Bastos will present results of laminar LFP recordings and how feed-forward gamma-band and feedback alpha/beta band modulations help to understand cognitive effects including attention, working memory, and prediction processing. Michelle Moerel will talk about how computational models can be combined with laminar fMRI to understand human auditory processing.

Below you find the important links of the virtual event. Embedded videos of the talks, discussions, and a summary of the hot topics are going to be added on the day after the event.

Continue reading “Third layer-fMRI dinner: Cognitive Models and Cortical Layers.”

Second Layer-fMRI dinner: Laminae in the brain; fMRI vs. electrophysiology

On Sept 28th 2020, the second virtual layer-fMRI event is scheduled.

This meeting is held as a succession of the first virtual dinner in May 2020: https://layerfmri.com/virtualevent1/

In this second event, it will be discussed how the research field can bridge the gap between layer-dependent activity measures that are obtained with fMRI and electrophysiology, respectively. Kamil Ugurbil will present the perspective of high resolution for human neuroscience, Lucia Melloni will present the perspective of depth-dependent electrophysiological recordings in humans, and Seong-Gi Kim will talk about the combination of both worlds, layer-fMRI and layer-dependent electrophysiological recordings.

Below you find the important links of the virtual event. Embedded videos of the talks, discussions, and a summary of the hot topics are going to be added on the day after the event.

Continue reading “Second Layer-fMRI dinner: Laminae in the brain; fMRI vs. electrophysiology”

First layer-fMRI Dinner: Layer-fMRI contrasts

On May 7th 2020, there was the first virtual layer-fMRI dinner event to discuss current issues in the field.

This meeting was held as a replacement of an originally planned layer-fMRI dinner at ISMRM and happened in succession of an earlier in-person layer-fMRI dinner in November 2019 (meeting minutes here).

Below you find the important links of the virtual event, videos of the talks and discussions, and a summary of the hot topics that were discussed.

- The meeting was organized by Luca Vizioli and Renzo Huber. It was supported by CMRR (Essa Yacoub and Kamil Ugurbil) as well as the Maastricht-York partnership grant (PIs: Aneurin Kennerley and Renzo Huber).

- There were 149 participants + 4 speakers!

- The layerfMRI slack-channel of the network has been opened to everyone and can be joined here: https://tinyurl.com/cdrfmri1.

- The content of the next meeting will be determined by the results of the survey here: https://layerfmri.page.link/meeting_survey. The meeting is scheduled for early July. As of May 8th, it looks like most people prefer to talk about analysis challenges.

Hot topics that were discussed

- How do we estimate sensitivity and specificity of a sequence?

- Validations that layer-specific fMRI signals are explainable by electrophysiology are necessary.

- How important is it to consider arterial artifacts for layer-fMRI signal interpretation?

- How are inflow effects considered in different layer-fMRI readout schemes?

- Can maps of physiological noise be helpful for segmentation and/or registration?

- What do maps of non-gaussian noise represent?

- The major limitation of layer-fMRI is still the resolution! Can we go to smaller voxels? How?

- How can we make use of layer-fMRI in non-primary areas? And how applicable is it?

- How can we make use of layer-fMRI in pathology? E.g. vascular diseases.

- The combination of experimental setup and acquisition contrast is important.

- Layer-fMRI is depth-dependent fMRI.

Talks and Discussion of the virtual event

All slides can be downloaded here: https://doi.org/10.5281/zenodo.3874364

Next meeting

The next virtual layer-fMRI dinner will tentatively be on September 28th (Europe and America) and September 29th (in Asia) on the topic of layer-fMRI vs. electrophysiology, with speakers including Kamil Ugurbil and Seong-Gi Kim.

2019 Minnesota workshop on Cortical Depth-Resolved fMRI Methods

This post summarizes the presentations, tutorials, and discussions of the 2019 UHF Minnesota Workshop on Cortical Depth-Resolved fMRI Methods, Nov 12th-Nov 13th.

Organiser: Cheryl Olman

Presenters: Alessio Fracasso, Natalia Petridou, Jonathan Polimeni, Kamil Uludag, Tim van Mourik, and Renzo Huber

Terminology consensus 🙂

- Layerification: The process of assigning depth-values to each voxel.

- Lettuce Head: Levelset with acoustic noise.

- Partial Volume: Ill-defined term for partial coverage. A field of view that is smaller than what freesurfer considers as “whole brain”.

Seong-Gi Kim: Layer-fMRI can help to investigate directional circuits.

Kendrick Kay: 17 / 19

taking the early response might help to be more specific

Kendrick Kay lists layer-fMRI methods with improved specificity

of course it’s snowing

new layer-fMRI scanners to come

messing with the biggest 7T machine room in existence

Jonathan Polimeni reminds everyone that there is still a lot to do for us.

Is layer-fMRI too easy?

Last planning session in the night before the workshop

Natalia Petridou discusses the advantage of CAIPI acceleration in sub-millimeter segmented 3D-EPI

Workshop Content

Cheryl Olman: Introductions and general outline:

Jonathan Polimeni: Overview of laminar fMRI best practices and current challenges

Part 1:

Part 2:

Hot Topic discussions included:

- How many cytoarchitectonically-defined layers are there, is 6 really a good number?

- How should we estimate the PSF?

- Resolution losses from resampling should be kept as small as possible. Viable strategies include: 1.) working in upsampled space, 2.) combining (concatenating) all transformations into one single transformation, 3.) using adequate interpolation functions.

- Layer smoothing can be helpful to depict layer activation features, but smoothed maps should always be accompanied by unsmoothed maps. Otherwise it can result in circularity.

- “Shifting” the cortical depth based on the functional baseline signal à la Peter Koopmans might introduce circularity. Tim: this approach is debunked.

- The accuracy of the surfaces (segmentation lines) is significantly higher than the voxel resolution. This is possible with predefined assumptions of signal intensities of GM and WM and a partial voluming model. -> higher resolution of the anatomy helps to improve the accuracy. However, it’s more important to keep the SNR up.
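The second strategy in the list above (concatenating all transformations into a single one) can be sketched with plain 4x4 affine matrices. The two transformations below, a small motion correction and a functional-to-anatomical registration, use made-up parameters and helper names of my own choosing. The point is only that the product of the matrices maps coordinates identically to applying the steps one after another, so the image needs to be interpolated once instead of twice.

```python
import numpy as np


def translation(tx, ty, tz):
    """4x4 homogeneous matrix for a translation (in mm)."""
    m = np.eye(4)
    m[:3, 3] = (tx, ty, tz)
    return m


def rotation_z(angle_deg):
    """4x4 homogeneous matrix for a rotation about the z axis."""
    a = np.deg2rad(angle_deg)
    m = np.eye(4)
    m[:2, :2] = [[np.cos(a), -np.sin(a)],
                 [np.sin(a), np.cos(a)]]
    return m


# Hypothetical steps with made-up parameters.
motion = rotation_z(1.5) @ translation(0.2, -0.1, 0.0)
func_to_anat = translation(3.0, 1.0, -2.0) @ rotation_z(-4.0)

# Matrices apply right to left: motion correction first, then registration.
combined = func_to_anat @ motion

point = np.array([10.0, 20.0, 30.0, 1.0])
two_steps = func_to_anat @ (motion @ point)
one_step = combined @ point
print(np.allclose(two_steps, one_step))
```

Nonlinear steps such as distortion correction cannot be folded into a matrix product, but warp fields can be composed in the same spirit before a single resampling.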

Renzo Huber: Hands-on scanning at 7T: Optimizing an EPI acquisition

- Scanner protocols are here: https://github.com/layerfMRI/Sequence_Github/tree/master/CMRR_training_scann_protocol_pdfs

- Explanatory slides are here: https://layerfmri.page.link/CMRR_2019_scanning

- Example data are here: https://openneuro.org/datasets/ds001563/versions/1.0.1

- Hot topic discussions were:

- Does GRAPPA-regularization blur the data between neighboring voxels or between GRAPPA ghosts?

- How to do phase-correction with navigators? Can we trust the EPI phase across time?

Tim van Mourik, Using GIRAFFE to set up analysis pipeline (boundary-based registration)

Part 1:

Part 2:

- Discussion of Giraffe Tools: https://giraffe.tools/porcupine/TimVanMourik/LayerAttention

Alessio Fracasso: Hands on analysis: Segmentation and layerification without surfaces

- Digital capture failed; we’re working on creating a replacement

- Hot topic discussion:

- The presented pipeline estimates layers with level-sets, without surfaces in voxel space.

fMRI contrasts: GE-BOLD, SE-BOLD and non-BOLD

- Natalia Petridou: GE, SE, GRASE

- Hot Topic discussion:

- GRASE has the advantages of both GE-BOLD and SE-BOLD, or does GRASE have the disadvantages of both GE-BOLD and SE-BOLD?

- Different layers and different contrasts have different timing response functions.

- Renzo Huber: non-BOLD (VASO, ASL …)

- Hot topic discussions:

- There is no clear winner of sequences. Sequence comparisons are never fair.

Kamil Uludag: T1-weighted EPI and laminar BOLD response modeling

- Hot topic discussions:

- There is no easy ground-truth of tissue type segmentation. When comparing methods, one needs to look at both approaches.

- -> taking the difference between task conditions and using the layer-dependent activation difference for neuroscience interpretations is not adequate <- This does not mean that previous studies that did this are necessarily wrong.

- The vein size difference across layers can be incorporated in the model as CBV.

- The vascular deconvolution method might come along with noise-amplification. When you have unreliable data quality to begin with, the deconvolution model might make more problems than it solves.

- The surprising CBF profiles are in agreement with previous studies from Ingo Marquardt and from electrophysiology.

Natalia Petridou: 3D-EPI

- Hot topic discussions:

- It is not so straightforward to correct for physiological noise when you have long readouts in 3D-EPI. The most appropriate approach is to take it as a snapshot acquisition at k-space center.

- K-space based approaches like RetroKCor might be more appropriate for 3D-EPI

- It is not clear why the physiological noise should become less severe in the thermal-noise-dominated regime. Is it more important how big the physiological noise is with respect to the BOLD magnitude, and less important how big the physiological noise is with respect to the thermal noise?

- Offline-discussion with Natalia Petridou: Motion is the single biggest limitation in high-res fMRI. The most effective way to minimize motion is to engage the participant. E.g. reward, if motion is low. E.g. penalty-based longer time in the scanner (repetition of runs), when motion is large.

Renzo Huber: Hands on Analysis: Layerification with LAYNII

- Handout, further material, example data, and further instructions are here: https://layerfmri.page.link/Layerification

- Recording Part 1:

- Recording Part 2:

- Hot topic discussions:

- How many layers should be extracted?

- Renzo Huber: extract as many layers as possible (potentially after upsampling).

- Tim Van Mourik: extract as many layers as independent samples across cortical depth.

- Consensus among all: the least subjective choice is to have as many layers as voxels. These layers are sparse and non-independent.

- Consensus among all: any number of layers is ok.

- Which interpolation function should one use to work in upsampled space?

- Consensus is that nearest neighbor is not adequate because it assumes that the signal is equally distributed within the voxel.

- The most adequate interpolation function would be zero-filling in k-space, which corresponds to sinc interpolation in image space.

- Consensus among all: if the result depends on the interpolation function, we shouldn’t trust the result to begin with.
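As a minimal sketch of the zero-filling idea from the interpolation discussion above, the following NumPy example upsamples a 1D profile by padding its Fourier spectrum with zeros. The function name `sinc_upsample_1d` and the example values are made up for illustration; real data would be 3D or 4D, and this is not how any particular package implements it.

```python
import numpy as np

def sinc_upsample_1d(profile, factor):
    """Upsample a 1D profile by zero-filling its (centered) Fourier spectrum."""
    n = profile.size
    n_up = n * factor
    # Move the zero frequency to the center so the padding surrounds the spectrum.
    spectrum = np.fft.fftshift(np.fft.fft(profile))
    padded = np.zeros(n_up, dtype=complex)
    start = n_up // 2 - n // 2
    padded[start:start + n] = spectrum
    # Undo the centering, transform back, and rescale for the longer array.
    upsampled = np.fft.ifft(np.fft.ifftshift(padded)) * factor
    # The input is real; for even lengths a small imaginary part can remain
    # between the original samples and is discarded here.
    return upsampled.real

profile = np.array([0.0, 1.0, 3.0, 2.0, 0.5, 0.0])  # made-up example values
upsampled = sinc_upsample_1d(profile, factor=4)
nearest = np.repeat(profile, 4)  # nearest-neighbor upsampling for comparison
```

Every fourth sample of `upsampled` reproduces the original values, while the samples in between follow a smooth curve instead of the step function that `nearest` produces.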

- How should we do statistics with sparse and non-independent voxel sampling across depth?

- Consensus among all: This is an unsolved problem. We don’t even know which signal magnitude to trust. Thus, it’s even less clear how to do statistics with it.

- Why is it such an obstacle if a software package depends on GSL? Future versions of LAYNII should not depend on it.

- It shouldn’t be so hard to make LAYNII compatible with nii.gz. Future versions should be able to read nii.gz.

- The advantages and disadvantages of equi-volume and equi-distance approaches were discussed. Renzo advises using equi-distance. While it contains negligible biases with respect to the cyto-layers, it does not come with the noise amplification of equi-volume.
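For readers new to the equi-distance principle, here is a minimal sketch. It assumes that for every gray-matter voxel the distance to the white-matter border and the distance to the CSF border are already known; the function and variable names are hypothetical, and this is not LAYNII's implementation.

```python
import numpy as np

def equidistant_layers(dist_to_wm, dist_to_csf, n_layers):
    """Assign layer indices 1..n_layers from a normalized equi-distance depth."""
    dist_to_wm = np.asarray(dist_to_wm, dtype=float)
    dist_to_csf = np.asarray(dist_to_csf, dtype=float)
    # Normalized cortical depth: 0 at the white-matter border, 1 at the CSF border.
    depth = dist_to_wm / (dist_to_wm + dist_to_csf)
    # Split the depth range into n_layers bins of equal width.
    layers = np.floor(depth * n_layers).astype(int) + 1
    # Voxels exactly on the CSF border would land in bin n_layers + 1; clip them.
    return np.clip(layers, 1, n_layers)

# Made-up distances (in mm) for three voxels of a 3 mm thick cortex.
layers = equidistant_layers([0.2, 1.5, 2.8], [2.8, 1.5, 0.2], n_layers=3)
```

The layer index here depends only on the two border distances. An equi-volume approach would additionally need a local curvature estimate.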


Cheryl Olman: A “complete” scanning session (MP2RAGE, some 3D GE EPI comparisons, T1-EPI)

- Hot topic discussions:

- Setup of T1-EPI, how to analyze it correctly?

Alessio Fracasso: Surface-based visualizations/partial brain segmentations

- Hot topic discussions:

- Looking at EPI data in anatomical space.

- How to minimize curvature bias of segmentation -> higher resolution.

Cheryl Olman: Discussion sessions throughout the workshop:

- What are the most important challenges of layer-fMRI?

- Nominal resolution is not the same as effective resolution

- There is no ground truth for quantifying the effective resolution (acquisition, biological, resampling).

- Layerification is hard with distortion and registration challenges.

- Anatomical segmentation

- The biggest challenges were collected in a survey by the ISMRM study group: https://doi.org/10.7490/f1000research.1115658.1

- What should every manuscript include?

- All standard sequence parameters must be reported. Furthermore, parameters of echo-train length, partial Fourier etc. should be mentioned too.

- Images of EPI data quality, e.g. representative tSNR maps, activity maps in native EPI space.

- Data of segmentation quality and registration quality should be shared.

Cheryl Olman: Wrap-up discussions:

- Where do we want to host workshop content?

- We’ll have a YouTube channel with recordings from this week: https://www.youtube.com/playlist?list=PLuA0pYRPZ4uAtJonp83YjXFtpqJ0kUADB

- Renzo offers to put meeting minutes and link collection of workshop material on layer-fMRI blog.

- Example data from the workshop will remain on the CMRR server for another while.

- How to continue discussions:

- Active members of the community (who know how to use Slack) will continue discussions on the Slack workspace depthresolvedfmri.slack.com. This workspace will be open to every layer enthusiast (on an invitation basis). If you have not received an invite yet, please contact us.

- Parts of the discussions will be mirrored on layerfMRI.com, including:

- Meeting minutes

- Continuously updated list of layer-fMRI papers (with a focus on human fMRI).

- List of job opportunities in layer-fMRI.

- List of layer-fMRI abstracts of current conferences.

- Do we want a white paper on a set of QC metrics (tSNR in ROI, true image resolution in RO/PE/SL directions, ?) that can be used to compare acquisitions?

- Response from all: Maybe

- Cheryl will contact the field about this soon.

- As opposed to the field of ASL, we don’t have a 20-year ongoing discussion or well-established, agreed-upon standards. Thus, it might be challenging. But there will probably be a basic set of agreed-upon best practices.

- Future satellite meetings: We want to keep organizing satellite meetings and informal meet-ups at conferences like ISMRM (Who volunteers? Who will attend? (e.g. Renzo and Luca?)), OHBM (-> Amir Shmuel), SfN and the BRAIN Investigators meeting (-> Sean Marrett).
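As a small illustration of one of the QC metrics mentioned in the white-paper discussion above (tSNR in an ROI), the following sketch computes a voxel-wise tSNR map as the temporal mean divided by the temporal standard deviation and averages it within a mask. The function names and the synthetic data are assumptions for illustration only; which exact definition a future white paper would adopt is open.

```python
import numpy as np

def tsnr_map(timeseries):
    """Voxel-wise tSNR: temporal mean divided by temporal standard deviation."""
    mean = timeseries.mean(axis=-1)
    std = timeseries.std(axis=-1, ddof=1)
    out = np.zeros_like(mean, dtype=float)
    # Leave voxels without temporal variance (e.g. background) at zero.
    np.divide(mean, std, out=out, where=std > 0)
    return out

def roi_tsnr(timeseries, roi_mask):
    """Average tSNR over the voxels selected by a boolean ROI mask."""
    return float(np.mean(tsnr_map(timeseries)[roi_mask]))

# Synthetic 4D data (x, y, z, time): baseline 100 with Gaussian noise of SD 2.
rng = np.random.default_rng(0)
data = 100.0 + rng.normal(0.0, 2.0, size=(4, 4, 2, 200))
mask = np.zeros((4, 4, 2), dtype=bool)
mask[1:3, 1:3, :] = True
value = roi_tsnr(data, mask)
```

Reporting such a number together with the ROI definition and the number of time points would make it easier to compare values across acquisitions.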