Authors: Renzo Huber and Faruk Gulban

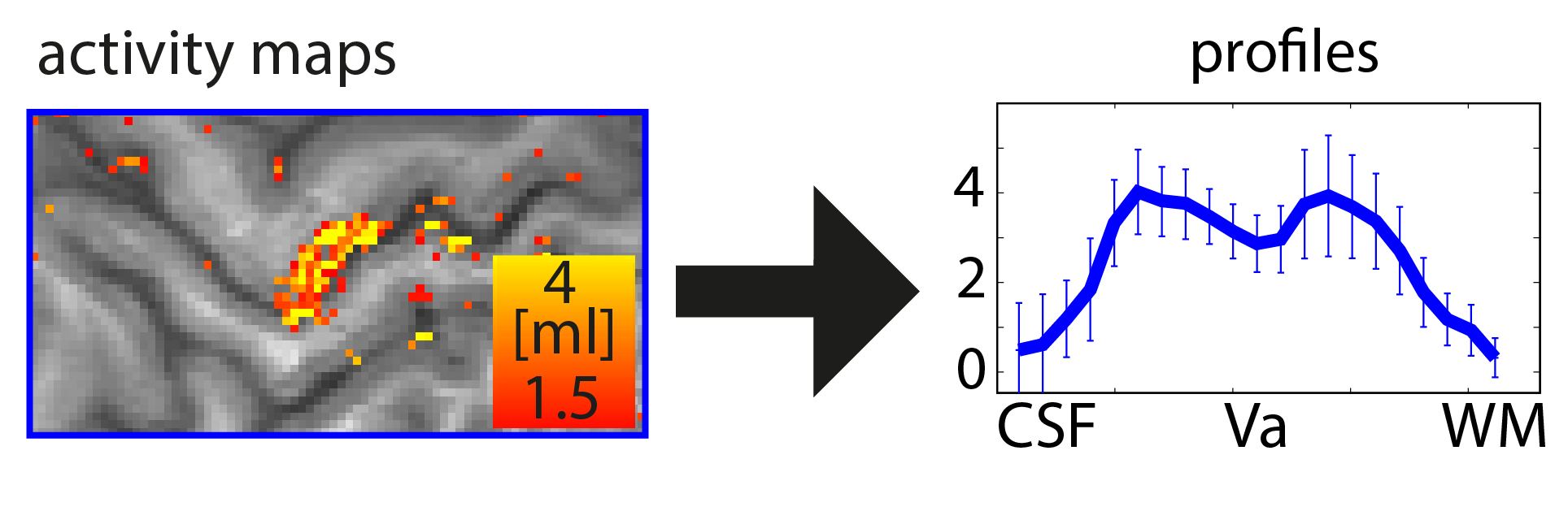

When you want to analyze functional magnetic resonance imaging (fMRI) signals across cortical depths, you need to know which voxel overlaps with which cortical depth. The relative cortical depth of each voxel is calculated from the geometry of the nearest cortical gray matter boundaries. One of these is the inner gray matter boundary, which often faces the white matter; the other is the outer gray matter boundary, which often faces the cerebrospinal fluid. Once the cortical depth of each voxel has been calculated from the gray matter geometry, layers can be assigned to cortical depths based on several principles.
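As a rough illustration of what "relative cortical depth" means, here is a minimal sketch. It assumes that each voxel already has a distance to the inner and to the outer gray matter boundary, and it uses the simple equidistant definition (0 at the inner boundary, 1 at the outer boundary). The function name `relative_depth` and its arguments are hypothetical and are not taken from any particular software.

```python
def relative_depth(dist_inner, dist_outer):
    """Equidistant relative depth of a voxel.

    dist_inner: distance from the voxel to the inner gray matter boundary
    dist_outer: distance from the voxel to the outer gray matter boundary
    Returns 0.0 at the inner boundary and 1.0 at the outer boundary.
    """
    thickness = dist_inner + dist_outer
    if thickness <= 0:
        raise ValueError("local cortical thickness must be positive")
    return dist_inner / thickness


# A voxel 1 mm from the inner boundary and 3 mm from the outer boundary
# sits a quarter of the way through the cortex.
print(relative_depth(1.0, 3.0))
```

This equidistant depth ignores cortical folding. It is the starting point that the layering principles discussed next adjust.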

One of the fundamental principles used for “assigning layers to cortical depths” (aka layering, layerification) is the equi-volume principle. This layering principle was proposed by Bok in 1929, who subdivided the cortex into little layer chunks that all have the same volume. As a consequence, gyri and sulci exhibit any given layer at a different cortical depth, depending on the cortical folding and the local volumes (see figure below).
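To get an intuition for why folding shifts the layers, consider a toy model of a small cortical column. This sketch assumes that the cross-sectional area of the column changes linearly from the inner to the outer boundary. Under that assumption, the relative depth that encloses a volume fraction `alpha` (counted from the inner boundary) has a closed-form solution. The function name and parameters are illustrative only, and this is not necessarily how LAYNII computes its layers.

```python
import math


def equivolume_depth(alpha, area_inner, area_outer):
    """Relative depth (0 = inner, 1 = outer boundary) below which a
    fraction `alpha` of the column volume lies.

    Assumes the column's cross-sectional area varies linearly with depth
    between `area_inner` and `area_outer` (a simplifying assumption).
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    if area_inner <= 0 or area_outer <= 0:
        raise ValueError("areas must be positive")
    # Flat cortex: equal areas, so equi-volume equals equidistant.
    if math.isclose(area_inner, area_outer):
        return alpha
    root = math.sqrt(alpha * area_outer**2 + (1.0 - alpha) * area_inner**2)
    return (root - area_inner) / (area_outer - area_inner)


# Where does the middle of the volume (alpha = 0.5) end up?
cases = [("flat", 1.0, 1.0), ("outer larger", 1.0, 2.0), ("inner larger", 2.0, 1.0)]
for label, a_in, a_out in cases:
    print(label, round(equivolume_depth(0.5, a_in, a_out), 3))
```

In this toy model, the half-volume depth sits at 0.5 for the flat case, moves toward the outer boundary (about 0.581) when the outer surface patch is twice as large as the inner one, and moves toward the inner boundary (about 0.419) in the opposite case. That is the kind of depth shift between differently folded parts of the cortex that the equi-volume principle describes.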

When it comes to applying the equi-volume principle in layer-fMRI, equi-volume layering has been through quite a story: a plot with many parallels to Anakin Skywalker.

In this blog post, the equi-volume layering approach is evaluated. Furthermore, it is demonstrated how to use it in the LAYNII software.
